Twitter Scalability - Performance is user experience

This is not another post about how bad performance is on Twitter. There are plenty of other people talking about that. Instead, this is a post on how you can define performance expectations in terms of the user experience.

For example:

- When I open a file that's very large I have a different performance expectation from when I open a small file.

- When I send a large package by mail I expect that it may take longer than a postcard or a letter (unless I pay more).

- When I travel I expect it'll take longer to get somewhere if it's further away.

These expectations are defined by the task at hand. Consider how the Twitter service is trying to scale and provide performance expectations.

- A person who is tracking 10 friends gets the same performance as a person who is tracking 10,000.

- A person who is being tracked by 10 friends has the perception that their messages are delivered as quickly as those of a person being tracked by 10,000.

- My stream of data is updated in real time regardless of whether I visit the site once a year or once an hour.

Basically, the user experience is 'unfair' in its balance of resources. Regular users of the site are punished by the super users. If you think of the site as a utility, then each person using the site should be entitled to a roughly equal share of the site's resources.

The Twitter status blog makes no secret that they believe the service should be architected as a messaging system. Each time a person posts a message, it is 'sent' to the followers of that person. So each time a popular user posts a message, it may be sent to 20,000 'inboxes', which can result in a massive number of back-end server requests to process the messages.
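To make the cost concrete, here is a minimal Python sketch of that "send to every inbox" model. The names `followers`, `inboxes`, `follow`, and `post` are hypothetical stand-ins for illustration, not anything from Twitter's actual system, and everything lives in memory.

```python
from collections import defaultdict

# Hypothetical in-memory stand-ins for the follower graph and per-user inboxes.
followers = defaultdict(set)   # author -> names of the people following them
inboxes = defaultdict(list)    # follower -> list of (author, text) messages


def follow(follower, author):
    """Record that `follower` tracks `author`."""
    followers[author].add(follower)


def post(author, text):
    """Copy one message into every follower's inbox; return the write count."""
    writes = 0
    for follower in followers[author]:
        inboxes[follower].append((author, text))
        writes += 1
    return writes


# One casual user with a single follower, one popular user with 20,000.
follow("friend", "casual")
for i in range(20_000):
    follow(f"fan{i}", "popular")

print(post("casual", "hello"))    # one inbox write
print(post("popular", "hello"))   # 20,000 inbox writes for the same action
```

The same "post" action costs one write for the casual user and 20,000 for the popular one, which is where the imbalance in resources comes from.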

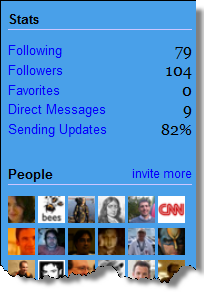

Consider how the performance expectations could be set through a simple user interface change:

By simply telling users that their updates are being sent, and what percentage of those updates is complete, the interface begins to set expectations for how the performance of the service will scale. For a user with 50-100 followers, sending will continue to be relatively quick, but for users with tens of thousands of followers there is the expectation that it could take several minutes to get all updates out.
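As a rough sketch of what such a status line might compute, the Python function below turns a delivery count into a message for the user. The function name `send_progress` and the wording of the messages are assumptions made for illustration.

```python
def send_progress(delivered, total):
    """Return a status line describing how far a send has progressed.

    `delivered` is the number of followers already updated and `total` is
    the number of followers the update is going to (both hypothetical inputs).
    """
    if total == 0:
        return "No followers to update"
    percent = delivered * 100 // total  # whole-number percentage, rounded down
    if percent >= 100:
        return "All updates sent"
    return f"Sending updates: {percent}% complete"


print(send_progress(80, 80))         # small account, already finished
print(send_progress(5_000, 20_000))  # popular account, still sending
```

A user with 80 followers will almost always see the finished state, while a user with 20,000 followers sees the percentage climb, which is exactly the expectation the interface is meant to set.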

If you define the user experience you want to achieve, you can architect your system with this in mind. The above implies that each user would have their own outgoing message queue, similar to an outbox in email. A collection of worker machines could then cycle through all the users and process a certain number of operations per user.

- If the user has messages in their outbox, process X messages and deliver them to the appropriate end-points, sending to the most popular people first when possible.

- If the user has no messages in their outbox, process Y people that they are following and pull messages from those people's message queues.
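The two rules above can be sketched as a single-process Python loop. This is a toy model under stated assumptions: the `User` class, `follow`, `take_turn`, `run_round`, and the values chosen for `X` and `Y` are all hypothetical, and a real deployment would spread this work across worker machines and persistent queues.

```python
from collections import deque

X = 2  # hypothetical: outbox deliveries allowed per turn
Y = 2  # hypothetical: followed users polled per turn


class User:
    def __init__(self, name):
        self.name = name
        self.followers = []    # users who follow this user
        self.following = []    # users this user follows
        self.outbox = deque()  # pending (text, recipient) deliveries
        self.timeline = []     # (author name, text) messages received
        self.poll_at = 0       # index of the next followed user to poll

    def post(self, text):
        # Queue one delivery per follower, most-followed recipients first.
        ranked = sorted(self.followers, key=lambda u: len(u.followers), reverse=True)
        for recipient in ranked:
            self.outbox.append((text, recipient))


def follow(follower, author):
    author.followers.append(follower)
    follower.following.append(author)


def take_turn(user):
    """Give one user a fixed slice of work; return the operations performed."""
    if user.outbox:
        # Rule 1: push up to X queued messages to their recipients.
        ops = 0
        while user.outbox and ops < X:
            text, recipient = user.outbox.popleft()
            recipient.timeline.append((user.name, text))
            ops += 1
        return ops
    # Rule 2: poll up to Y followed users and pull anything addressed to us.
    polled = 0
    for _ in range(min(Y, len(user.following))):
        source = user.following[user.poll_at % len(user.following)]
        user.poll_at += 1
        for item in [m for m in source.outbox if m[1] is user]:
            source.outbox.remove(item)
            user.timeline.append((source.name, item[0]))
        polled += 1
    return polled


def run_round(users):
    """Visit every user once, like one pass of a round-robin scheduler."""
    return sum(take_turn(user) for user in users)


popular = User("popular")
fans = [User(f"fan{i}") for i in range(5)]
for fan in fans:
    follow(fan, popular)
popular.post("hello")

run_round([popular] + fans)
print(len(popular.outbox), [len(fan.timeline) for fan in fans])
```

In this small run the popular user's turn pushes only `X` deliveries, and the remaining followers pull their copy from the popular user's queue when their own turn comes around, so no single user can consume more than a fixed slice of work per pass.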

The above is a fairly simple system, similar to how an operating system kernel performs process time-slicing by allocating a consistent amount of CPU time to each process. Similarly, each person is allocated an equal slice of the sending/fetching pie. The above process has the following nice characteristics:

- If you follow few and few follow you, you'll have a very responsive experience.

- If you follow few and many follow you, most of your time is spent sending updates.

- If you follow many and few follow you, most of your time is spent fetching updates.

- If you follow many and many follow you, your updates don't slow down the system; they just get added to the queue. Additionally, people with lots of followers are able to carry on conversations with other popular people. (The TechCrunch chatting with Scoble problem.)

The system would scale in a more linear way. Certain users would experience slow sending during peak times, but this would be more isolated and a problem that is easier to solve with more worker machines. The key thing is that by setting the user experience expectations of performance with an outbox or a sending status, the users of the system can visually see the health and performance of the system.
